[CI] Build and Test XGBoost GPU algorithm with Microsoft Visual Studio 2019 · Issue #7024 · dmlc/xgboost · GitHub

![Szilard [Deeper than Deep Learning] on Twitter: "5/n My recommendations are still: If you don't have a GPU, lightgbm (CPU) is the fastest. If you have a GPU, xgboost (GPU) is also](https://pbs.twimg.com/media/D5fkhanUcAAD102.jpg:large)

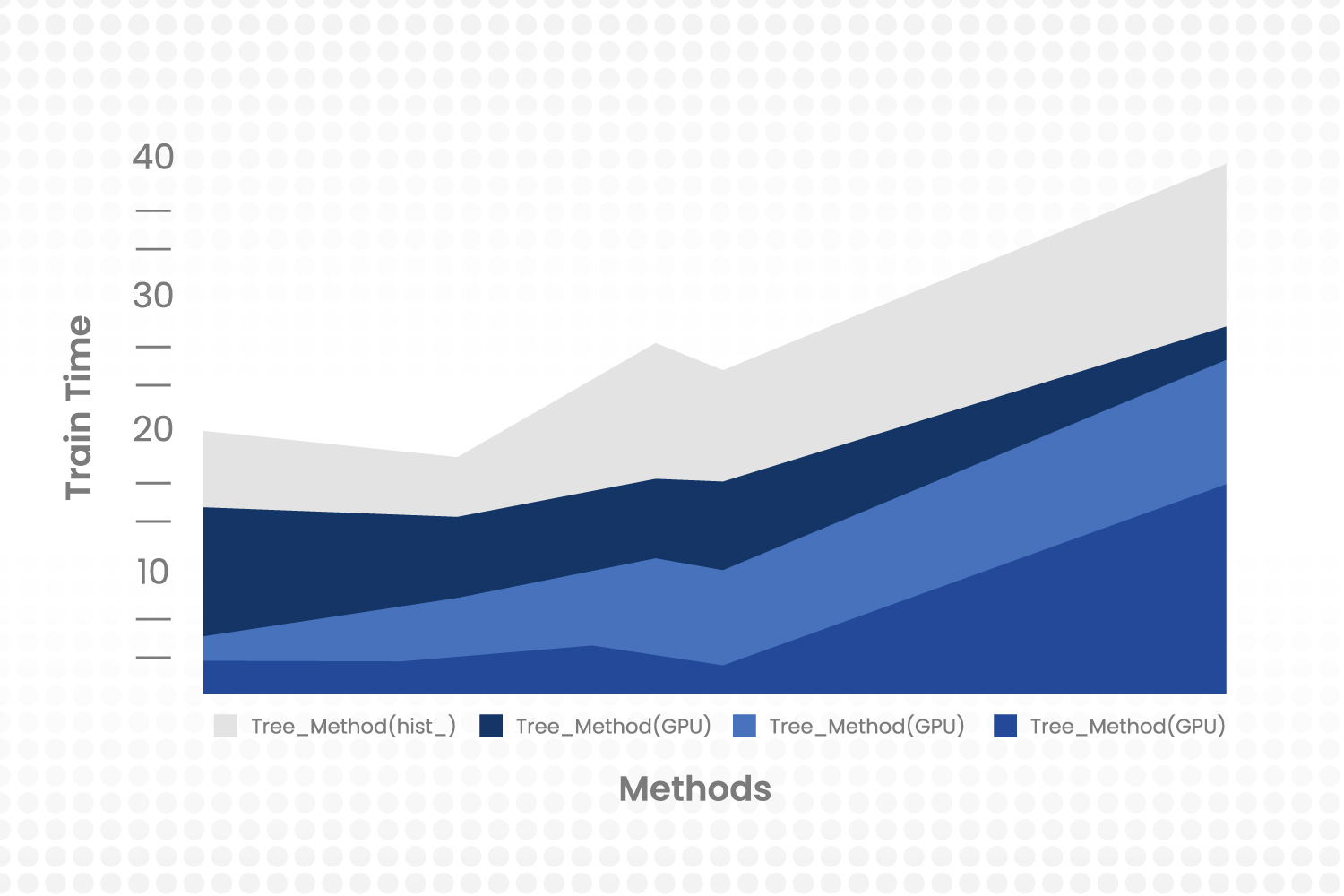

Szilard [Deeper than Deep Learning] on Twitter: "5/n My recommendations are still: If you don't have a GPU, lightgbm (CPU) is the fastest. If you have a GPU, xgboost (GPU) is also

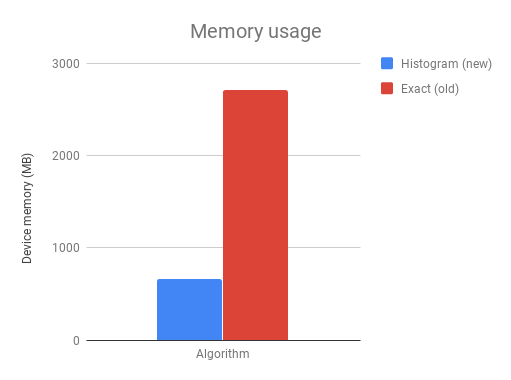

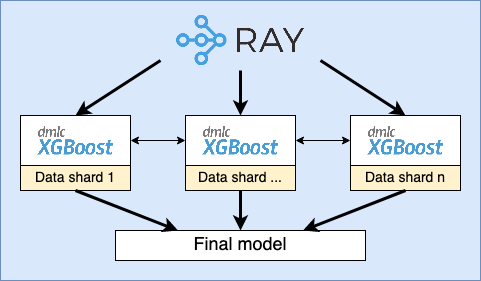

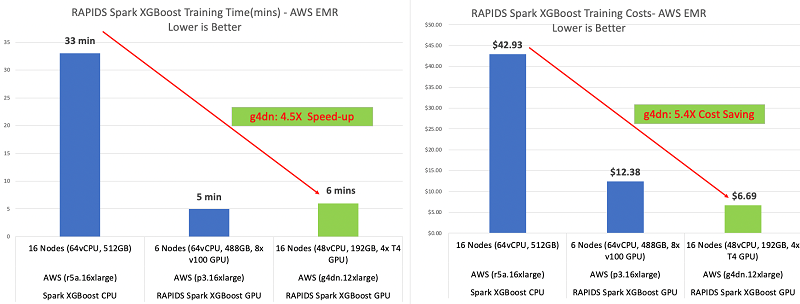

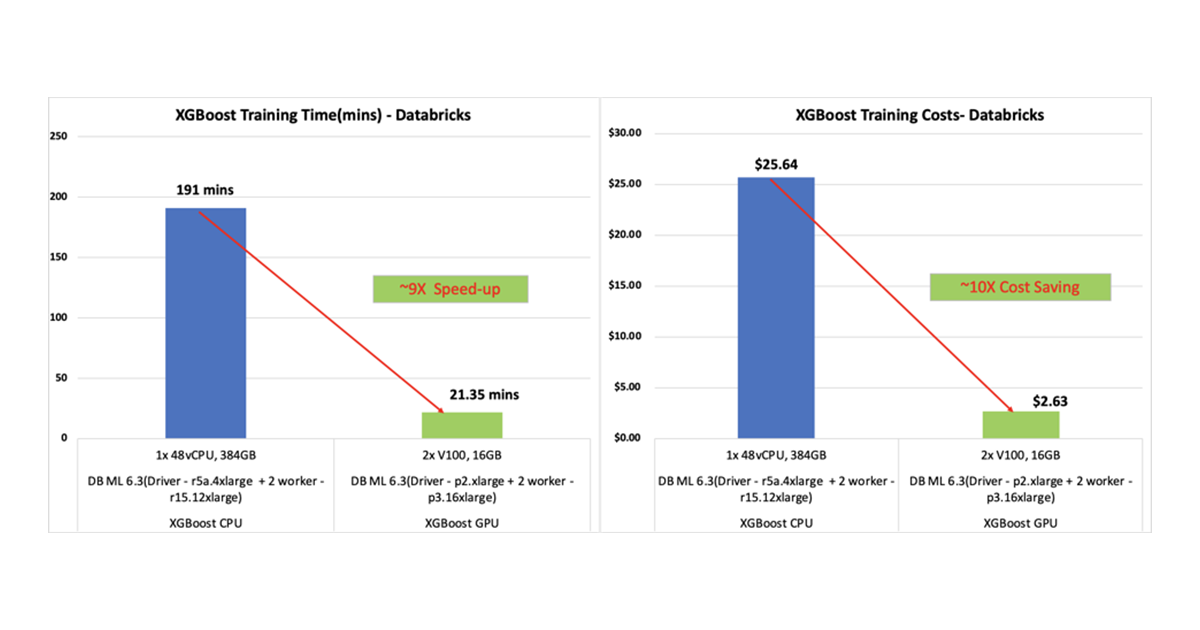

Improving RAPIDS XGBoost performance and reducing costs with Amazon EMR running Amazon EC2 G4 instances | AWS Big Data Blog

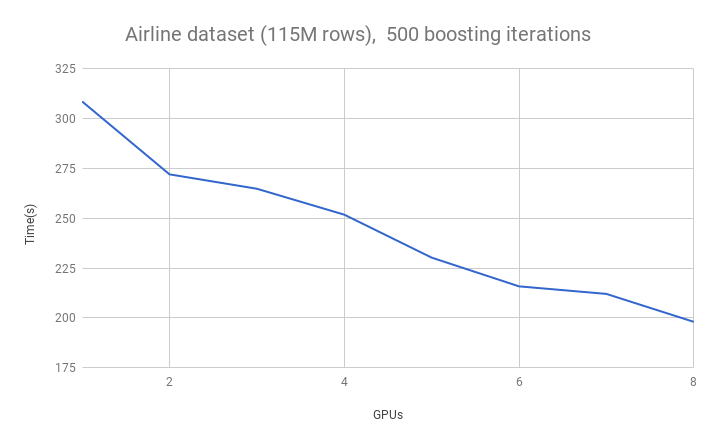

![[PDF] XGBoost: Scalable GPU Accelerated Learning | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e9b6ab0289cbe7a0a858dea944aafedc617addce/4-Table1-1.png)

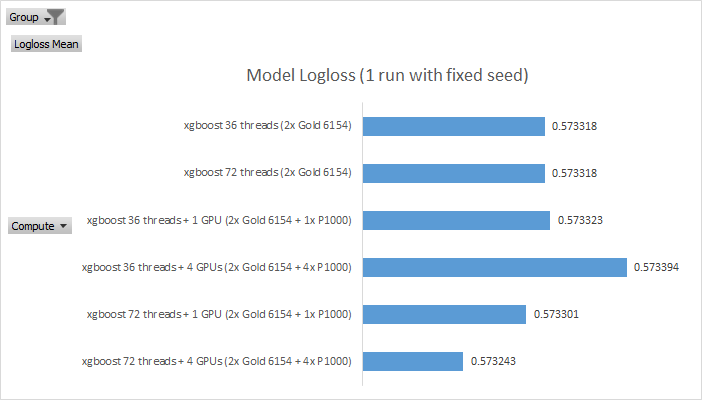

![Accelerating the XGBoost algorithm using GPU computing [PeerJ]](https://dfzljdn9uc3pi.cloudfront.net/2017/cs-127/1/fig-9-2x.jpg)